Bionic glasses for poor vision

A set of glasses packed with technology normally seen in smartphones and games consoles is the main draw at one of the featured stands at this year’s Royal Society Summer Science Exhibition.

But the exhibit isn’t about the latest must-have gadget; it’s about aiding those with poor vision and giving them greater independence.

‘We want to be able to enhance vision in those who’ve lost it or who have little left or almost none,’ explains Dr Stephen Hicks of the Department of Clinical Neurology at Oxford University. ‘The glasses should allow people to be more independent – finding their own directions and signposts, and spotting warning signals,’ he says.

Technology developed for mobile phones and computer gaming – such as video cameras, position detectors, face recognition and tracking software, and depth sensors – is now readily and cheaply available. So Oxford researchers have been looking at ways that this technology can be combined into a normal-looking pair of glasses to help those who might have just a small area of vision left, have cloudy or blurry vision, or can’t process detailed images.

The glasses should be appropriate for common types of visual impairment such as age-related macular degeneration and diabetic retinopathy. NHS Choices estimates around 30% of people who are over 75 have early signs of age-related macular degeneration, and about 7% have more advanced forms.

‘The types of poor vision we are talking about are where you might be able to see your own hand moving in front of you, but you can’t define the fingers,’ explains Stephen.

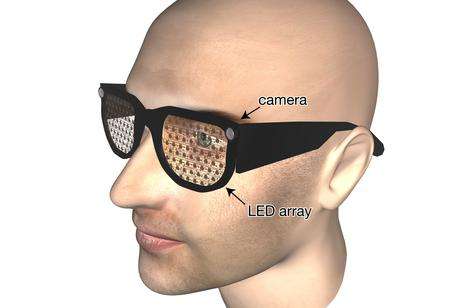

The glasses have video cameras mounted at the corners to capture what the wearer is looking at, while a display of tiny lights embedded in the see-through lenses of the glasses feeds back extra information about objects, people or obstacles in view.

In between, a smartphone-type computer running in your pocket recognises objects in the video image or tracks where a person is, driving the lights in the display in real time.

The extra information the glasses display about their surroundings should allow people to navigate round a room, pick out the most relevant things and locate objects placed nearby.

‘The glasses must look discreet, allow eye contact between people and present a simplified image to people with poor vision, to help them maintain independence in life,’ says Stephen. These guiding principles are important for coming up with an aid that is acceptable for people to wear in public, with eye contact being so important in social relationships, he explains.

The see-through display means other people can see you, while different light colours might allow different types of information to be fed back to the wearer, Stephen says. You could have different colours for people, or important objects, and brightness could tell you how near things were.
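As a rough illustration of that idea, the sketch below maps a detected item to a light colour by type and scales its brightness by how near it is. It is not the Oxford group's software: the colour choices, the 5 m range and the names `Detection`, `CATEGORY_COLOURS`, `MAX_RANGE_M` and `led_colour` are all assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical colour per category (RGB); the article only says that
# different colours "might" be used for different types of information.
CATEGORY_COLOURS = {
    "person": (0, 255, 0),
    "object": (255, 255, 0),
    "obstacle": (255, 0, 0),
}

MAX_RANGE_M = 5.0  # assumed maximum distance at which a light is still lit


@dataclass
class Detection:
    category: str      # e.g. "person", "object", "obstacle"
    distance_m: float  # distance reported by a depth sensor, in metres


def led_colour(det: Detection) -> tuple:
    """Pick a colour from the category and dim it linearly with distance."""
    base = CATEGORY_COLOURS.get(det.category, (255, 255, 255))
    # Clamp the distance so brightness always stays between 0 and 1.
    clamped = min(max(det.distance_m, 0.0), MAX_RANGE_M)
    brightness = 1.0 - clamped / MAX_RANGE_M
    return tuple(round(channel * brightness) for channel in base)
```

Under these assumptions, a person standing right in front of the wearer would show as full-brightness green, and the same light would fade to nothing as the person moved 5 m or more away.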

Stephen even suggests it may be possible for the technology to read back newspaper headlines. He says something called optical character recognition is coming on, so it is possible to foresee a computer distinguishing headlines from a video image and then having these read back to the wearer through earphones that come with the glasses. A whole stream of such ideas and uses is possible, he suggests. There are barcode readers in some mobile phones that download the prices of products; such barcode and price tag readers could also be useful additions to the glasses.

Stephen believes these hi-tech glasses can be realised at a cost similar to that of a smartphone – around £500. For comparison, a guide dog costs around £25,000-30,000 to train, he estimates.

He adds that people will have to get used to the extra information relayed on the glasses’ display, but that it might be similar to physiotherapy – the glasses will need to be tailored for individuals, their vision and their needs, and it will take a bit of time and practice to start seeing the benefits.

The exhibit at the Royal Society will take visitors through how the technology will work. ‘The primary aim is to simulate the experience of a visual prosthetic to give people an idea of what can be seen and how it might look,’ Stephen says.

A giant screen with video images of the exhibition floor itself will show people-tracking and depth perception at work. Another screen will invite visitors to see how good they are at navigating with this information. A small display added to the lenses of ski goggles should give people sufficient information to find their way round a set of tasks. An early prototype of a transparent LED array for the eventual glasses will also be on display.

All of this is very much at an early stage. The group is still assembling prototypes of its glasses. But as well as being one of the featured stands at the Royal Society’s exhibition, the researchers have funding from the National Institute for Health Research to do a year-long feasibility study and plan to try out early systems with a few people in their own homes later this year.

Provided by Oxford University