July 27, 2010 report

Good conversation results in a 'mind meld'

(PhysOrg.com) -- Researchers studying human conversation have discovered the brains of listeners and speakers become synchronized, and this "neural coupling" makes for effective communication. In essence, the participants’ brains connect in a kind of "mind meld."

Psychologist Uri Hasson of Princeton University wanted to find out which areas of the brain are active during speaking and during listening, in order to test the hypothesis that these areas overlap more than is generally assumed. It has been noted, for example, that people taking part in conversations will often subconsciously imitate each other’s grammar, rates of speaking and even gestures and posture.

In the first part of the experiment, graduate student Lauren Silbert placed her head in a functional magnetic resonance imaging (fMRI) machine for fifteen minutes, while she recounted an unrehearsed story from her high-school years.

The research team recorded the story using a microphone capable of filtering out the noise of the fMRI machine. In the second part of the experiment, each volunteer had his or her head scanned by the fMRI machine while listening to the recording.

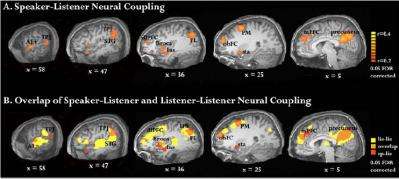

The team found a great deal of synchronization between the activity in Silbert’s brain and in those of the 11 volunteers, with the same regions of the brains lighting up at or near the same points in the story. This finding was surprising, given the long-held belief that speaking and listening use separate areas of the brain. The areas of the brain affected were linked to language, but their exact functions are as yet unknown.

In most areas of the brain the activation pattern appeared in the listeners’ brains one to three seconds after it had appeared in Silbert’s brain. In a few other areas, including an area in the frontal lobe, the pattern appeared in the listeners’ brains before it appeared in Silbert’s, which the researchers thought could represent the listeners anticipating what was coming next in the story.
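As a rough illustration of how such timing offsets can be measured, the sketch below shifts a listener's signal against a speaker's signal and looks for the shift with the highest correlation. It is not the researchers' analysis: the function names (`pearson`, `lagged_correlation`, `best_lag`), the synthetic signals and the one-sample-per-time-step assumption are all invented for this example.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)

def lagged_correlation(speaker, listener, lag):
    """Correlate speaker[t] with listener[t + lag].

    lag > 0: the listener's signal trails the speaker's by `lag` samples.
    lag < 0: the listener's signal runs ahead of the speaker's.
    """
    if lag >= 0:
        s = speaker[:len(speaker) - lag]
        l = listener[lag:]
    else:
        s = speaker[-lag:]
        l = listener[:len(listener) + lag]
    n = min(len(s), len(l))
    return pearson(s[:n], l[:n])

def best_lag(speaker, listener, max_lag):
    """Return the lag in [-max_lag, max_lag] with the highest correlation."""
    return max(range(-max_lag, max_lag + 1),
               key=lambda k: lagged_correlation(speaker, listener, k))

# Synthetic stand-in for a speaker's activity in one brain area (not real data).
speaker = [math.sin(0.3 * t) + 0.5 * math.sin(0.11 * t) for t in range(200)]

# A hypothetical listener area that echoes the speaker two samples later.
delayed_listener = [0.0, 0.0] + speaker[:-2]

# A hypothetical listener area that runs one sample ahead of the speaker.
anticipating_listener = speaker[1:] + [0.0]

print(best_lag(speaker, delayed_listener, 5))
print(best_lag(speaker, anticipating_listener, 5))
```

In this toy setup a positive best lag corresponds to a listener area that follows the speaker, and a negative one to an area that anticipates the speaker. Converting a lag in samples to seconds would require the scanner's sampling interval, which the article does not give.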

The researchers then asked the subjects to re-tell the story they had heard, and found there was a positive correlation between the strength of the neural coupling and the volunteer’s ability to recall the story details. Hasson concluded that the “more similar our brain patterns during a conversation, the better we understand each other.”

A third stage in the experiment was designed to ensure the neural coupling was not an experimental artifact. In this stage 11 volunteers, all English speakers, were asked to listen to a story told in Russian, which none of them understood. In this experiment no neural coupling was seen. In a final stage, the graduate student told a different story while having her brain scanned, and the results were compared to the brain patterns of the listeners of the original story. As with the Russian story, no coupling was seen.

Hasson said the next step in the research is to design an experimental setup in which two subjects can have their brains scanned by fMRI simultaneously while they are having a conversation. He predicted that this would produce especially strong synchronization, and also speculated that neural coupling would be stronger in people talking face-to-face than in conversations over the phone or by video conferencing.

The results are published in the Proceedings of the National Academy of Sciences journal and the paper is available online.

More information: Speaker-listener neural coupling underlies successful communication, Greg J. Stephens et al., PNAS, Published online before print, doi:10.1073/pnas.1008662107

© 2010 PhysOrg.com