Super cool atom thermometer

As physicists strive to cool atoms down to ever more frigid temperatures, they face the daunting task of developing new, reliable ways of measuring these extreme lows. Now a team of physicists has devised a thermometer that can potentially measure temperatures as low as tens of trillionths of a degree above absolute zero. Their experiment is reported in the current issue of Physical Review Letters and highlighted with a Viewpoint in the December 7 issue of Physics.

Physicists can currently cool atoms to a few billionths of a degree, but even this is too hot for certain applications. For example, ultracold atoms could realize Richard Feynman's dream of using one quantum system to simulate another, here the complex quantum mechanical behavior of electrons in certain materials. This would require cooling the atoms to temperatures at least a hundred times lower than any achieved so far. Unfortunately, the thermometers that work at a few billionths of a degree rely on physics that no longer applies at these even lower temperatures.

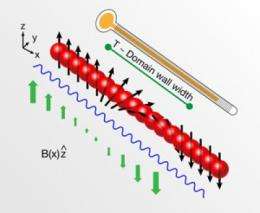

A team at the MIT-Harvard Center for Ultra-Cold Atoms has now developed a thermometer that can work in this unprecedentedly cold regime. The trick is to place the atoms in a magnetic field gradient and then measure how their magnetization varies across the sample. Combined with a handful of easily measured quantities, this magnetization yields the temperature of the system. Although the team demonstrated the method on atoms cooled to one billionth of a degree, they also showed that it should work for atoms hundreds of times colder, which could make the thermometer an invaluable tool for physicists pushing the cold frontier.
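The idea of reading temperature off a magnetization profile can be illustrated with a deliberately simplified model. Assume the atoms act as independent two-state spins whose energy splitting, Δμ·B, changes linearly across the cloud because of a field gradient. For any two-level system the magnetization is m = tanh(ΔE / 2k_BT), so in a gradient artanh(m) is a straight line in position whose slope is inversely proportional to T. This is an illustrative assumption, not the team's actual analysis: the function name `temperature_from_profile`, the `m_max` cutoff and every numerical value below are hypothetical.

```python
import numpy as np

K_B = 1.380649e-23        # Boltzmann constant in J/K (exact SI value)
MU_B = 9.2740100783e-24   # Bohr magneton in J/T


def temperature_from_profile(x, m, delta_mu, grad_b, m_max=0.9):
    """Estimate temperature (K) from a magnetization profile m(x).

    Hypothetical, simplified model (not the paper's full analysis):
    independent two-state atoms in a linear field gradient, so
        m(x) = tanh(delta_mu * grad_b * (x - x0) / (2 * K_B * T)).
    Then artanh(m) is linear in x with slope delta_mu * grad_b / (2 * K_B * T).
    """
    x = np.asarray(x, dtype=float)
    m = np.asarray(m, dtype=float)
    # Fit only the unsaturated central region, where artanh(m) is well conditioned.
    keep = np.abs(m) < m_max
    if keep.sum() < 2:
        raise ValueError("need at least two unsaturated points to fit a slope")
    slope, _intercept = np.polyfit(x[keep], np.arctanh(m[keep]), 1)
    # Use |slope|: its sign only reflects which spin state is labelled +1.
    return delta_mu * grad_b / (2 * K_B * abs(slope))


# Usage with synthetic, noise-free data (all parameter values are assumptions).
delta_mu = MU_B            # assumed moment difference between the two spin states, J/T
grad_b = 3e-4              # assumed field gradient, T/m
T_true = 1e-9              # 1 nK, the temperature used to generate the fake profile
x = np.linspace(-50e-6, 50e-6, 41)   # positions across the cloud, in metres
m = np.tanh(delta_mu * grad_b * x / (2 * K_B * T_true))
T_est = temperature_from_profile(x, m, delta_mu, grad_b)
print(f"{T_est:.3e} K")    # prints 1.000e-09 K
```

Restricting the fit to |m| < `m_max` is a design choice: near full polarization artanh(m) diverges, so any measurement noise there would dominate the slope. In this model a colder sample gives a sharper transition between the two spin domains, which is why the width of the profile, rather than an absolute field calibration alone, carries the temperature information.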

More information: Spin Gradient Thermometry for Ultracold Atoms in Optical Lattices, David M. Weld, Patrick Medley, Hirokazu Miyake, David Hucul, David E. Pritchard, and Wolfgang Ketterle, Phys. Rev. Lett. 103, 245301 (2009). Published December 7, 2009.

Source: American Physical Society