Scientists create first electronic quantum processor

A team led by Yale University researchers has created the first rudimentary solid-state quantum processor, taking another step toward the ultimate dream of building a quantum computer.

They also used the two-qubit superconducting chip to successfully run elementary algorithms, such as a simple search, demonstrating quantum information processing with a solid-state device for the first time. Their findings will appear in Nature's advance online publication on June 28.

"Our processor can perform only a few very simple quantum tasks, which have been demonstrated before with single nuclei, atoms and photons," said Robert Schoelkopf, the William A. Norton Professor of Applied Physics & Physics at Yale. "But this is the first time they've been possible in an all-electronic device that looks and feels much more like a regular microprocessor."

Working with a group of theoretical physicists led by Steven Girvin, the Eugene Higgins Professor of Physics & Applied Physics, the team manufactured two artificial atoms, or qubits ("quantum bits"). While each qubit is actually made up of a billion aluminum atoms, it acts like a single atom that can occupy two different energy states. These states are akin to the "1" and "0" or "on" and "off" states of regular bits employed by conventional computers. Because of the counterintuitive laws of quantum mechanics, however, scientists can effectively place qubits in a "superposition" of multiple states at the same time, allowing for greater information storage and processing power.

For example, imagine having four phone numbers, including one for a friend, but not knowing which number belonged to that friend. You would typically have to try two to three numbers before you dialed the right one. A quantum processor, on the other hand, can find the right number in only one try.

"Instead of having to place a phone call to one number, then another number, you use quantum mechanics to speed up the process," Schoelkopf said. "It's like being able to place one phone call that simultaneously tests all four numbers, but only goes through to the right one."
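The phone-number example can be reproduced on an ordinary computer with a few lines of arithmetic. The sketch below assumes the "simple search" is Grover's algorithm, which the article does not name, and it only simulates the amplitudes classically; it is not a description of how the Yale chip is programmed. The function name `grover_search_four` and the choice of entry 2 as the friend's number are illustrative.

```python
import math


def grover_search_four(marked: int) -> list[float]:
    """Simulate one Grover iteration over 4 entries (assumed algorithm).

    Returns the probability of reading out each entry afterwards.
    """
    n_items = 4
    # Start in an equal superposition: every entry has amplitude 1/2.
    amps = [1 / math.sqrt(n_items)] * n_items
    # Oracle step: flip the sign of the marked entry's amplitude.
    amps[marked] = -amps[marked]
    # Diffusion step: reflect every amplitude about the mean amplitude.
    mean = sum(amps) / n_items
    amps = [2 * mean - a for a in amps]
    # Measurement probabilities are the squared amplitudes.
    return [a * a for a in amps]


# Hypothetical case: the friend's number is entry 2 of the four.
probs = grover_search_four(marked=2)
print(probs)
```

With four entries, a single oracle query followed by one reflection puts all of the probability on the marked entry, which is the "one try" in the example. A classical caller needs 2.25 tries on average.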

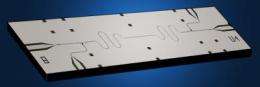

These sorts of computations, though simple, have not been possible using solid-state qubits until now in part because scientists could not get the qubits to last long enough. While the first qubits of a decade ago were able to maintain specific quantum states for about a nanosecond, Schoelkopf and his team are now able to maintain theirs for a microsecond—a thousand times longer, which is enough to run the simple algorithms. To perform their operations, the qubits communicate with one another using a "quantum bus"—photons that transmit information through wires connecting the qubits—previously developed by the Yale group.

The key that made the two-qubit processor possible was getting the qubits to switch "on" and "off" abruptly, so that they exchanged information quickly and only when the researchers wanted them to, said Leonardo DiCarlo, a postdoctoral associate in applied physics at Yale's School of Engineering & Applied Science and lead author of the paper.

Next, the team will work to increase the amount of time the qubits maintain their quantum states so they can run more complex algorithms. They will also work to connect more qubits to the quantum bus. The processing power increases exponentially with each qubit added, Schoelkopf said, so the potential for more advanced quantum computing is enormous. But he cautions it will still be some time before quantum computers are being used to solve complex problems.

"We're still far away from building a practical quantum computer, but this is a major step forward."
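One common way to read the exponential growth Schoelkopf describes is in terms of the numbers needed to describe the processor: a register of n qubits is specified by 2^n amplitudes, so each added qubit doubles the count. The short sketch below tabulates that growth; the function name `state_vector_size` and the qubit counts chosen are illustrative only, and this is a reading of the remark, not a measure of practical computing speed.

```python
def state_vector_size(num_qubits: int) -> int:
    """Number of amplitudes needed to describe a register of qubits."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    # Each qubit doubles the number of basis states.
    return 2 ** num_qubits


# The two-qubit chip in the article, followed by some larger hypothetical registers.
for n in (2, 3, 10, 20):
    print(f"{n} qubits -> {state_vector_size(n)} amplitudes")
```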

More information: www.nature.com/nature/journal/ … ull/nature08121.html

Source: Yale University