From 3-D to 6-D: Researchers developing super-realistic image system

(PhysOrg.com) -- By producing "6-D" images, an MIT professor and colleagues are creating unusually realistic pictures that not only have a full three-dimensional appearance, but also respond to their environment, producing natural shadows and highlights depending on the direction and intensity of the illumination around them.

The process can also be used to create images that change over time as the illumination changes, resulting in animated pictures that move just from changes in the sun's position, with no electronics or active control.

To create "the ultimate synthetic display," says Ramesh Raskar, an associate professor at the MIT Media Lab, "the display should respond not just to a change in viewpoint, but to changes in the surrounding light."

Raskar and his colleagues will describe the system, which is based entirely on an arrangement of lenses and screens, on Aug. 11 at the annual SIGGRAPH (Special Interest Group on Graphics and Interactive Techniques) conference of the Association for Computing Machinery held in Los Angeles.

Three-dimensional images, created using a variety of systems that make separate images for each eye, have been around for many decades. The new MIT process could bring an unprecedented degree of realism to such images.

The basic concept is similar to that of the inexpensive 3-D displays sometimes used on postcards and novelty items, which use a plastic overlay containing a series of parallel linear lenses that form a visible set of vertical lines over the image. (This differs from the approach used for holograms, which are made with laser light.) In addition to three-dimensional images, these displays are sometimes used to present a series of images that change as you view them from different angles from side to side. This can simulate simple motion, such as a car moving along a road.
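To make the side-to-side effect concrete, here is a simplified model of how such a print might be prepared. It assumes each linear lens covers N adjacent printed columns, one taken from each of N source images, and that the lens sends each column toward a different horizontal angle. The function names, the 30-degree viewing zone and the column ordering are hypothetical choices for illustration, and the model ignores real lens optics.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values


def interleave_views(views: List[Image]) -> Image:
    """Build a lenticular print from N equally sized views.

    Assumption: each lens covers N adjacent printed columns, so printed
    column c comes from view c % N at lens position c // N.
    """
    n = len(views)
    height = len(views[0])
    width = len(views[0][0])
    printed = []
    for row in range(height):
        out_row = []
        for lens in range(width):
            # One column from every view sits under each lens.
            for k in range(n):
                out_row.append(views[k][row][lens])
        printed.append(out_row)
    return printed


def view_for_angle(angle_deg: float, n_views: int, field_deg: float = 30.0) -> int:
    """Map a horizontal viewing angle to the view index the lenses reveal.

    Assumption (hypothetical): the lenses spread the N columns evenly over
    a viewing zone of field_deg degrees centred on the display normal.
    Angles outside the zone are clamped to the nearest edge view.
    """
    half = field_deg / 2
    clamped = max(-half, min(half, angle_deg))
    idx = int((clamped + half) / field_deg * n_views)
    return min(idx, n_views - 1)
```

For two 1 × 2 views `[[1, 2]]` and `[[9, 8]]`, `interleave_views` returns `[[1, 9, 2, 8]]`: each lens position carries one column from each view, and the viewer's angle decides which of those columns is seen.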

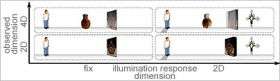

By using an array of tiny square lenses instead of linear ones, such displays can also be made to change as you change the viewing angle up or down, making a "4-D" image. This reveals different views as the viewer moves horizontally as well as vertically. (The four dimensions are the two coordinates across the image surface plus the horizontal and vertical viewing angles.) The new "lighting-aware" system uses further layers of lenses and screens to add two more dimensions of change, corresponding to the horizontal and vertical angles of the incoming light. The image that is seen then depends not only on the position of the viewer but also on the direction of the illumination.
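One way to picture the six dimensions is as a lookup table indexed by pixel position (x, y), viewing direction and lighting direction, with each direction discretized into two angle indices. The sketch below assumes distant lighting, so the illumination is described only by how much light arrives from each direction, and computes what each viewing direction would see as a weighted sum over those light directions. This is a generic reflectance-field model offered for illustration; the names `relight`, `Field6D` and `Lighting` are hypothetical, and it does not describe the optics of the lens-and-screen prototype itself.

```python
from collections import defaultdict
from typing import Dict, Tuple

# Hypothetical discretized 6-D field:
# (x, y, view_u, view_v, light_u, light_v) -> fraction of the light arriving
# from that lighting direction that the pixel sends toward that view.
Field6D = Dict[Tuple[int, int, int, int, int, int], float]

# Assumed distant illumination: intensity arriving from each direction.
Lighting = Dict[Tuple[int, int], float]


def relight(field: Field6D, lighting: Lighting) -> Dict[Tuple[int, int, int, int], float]:
    """Return the 4-D image (x, y, view_u, view_v) seen under `lighting`.

    Each output sample is sum over light directions l of
    field[x, y, view, l] * lighting[l]; directions absent from
    `lighting` contribute nothing.
    """
    out: Dict[Tuple[int, int, int, int], float] = defaultdict(float)
    for (x, y, vu, vv, lu, lv), weight in field.items():
        out[(x, y, vu, vv)] += weight * lighting.get((lu, lv), 0.0)
    return dict(out)
```

In this model, moving a single light from direction (0, 0) to direction (1, 0) changes which views of a pixel brighten, which is the lighting-dependent behaviour described above; a slowly moving source such as the sun would step through such changes with no electronics involved.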

In an initial test of the principle, Raskar and his team created an image of a glass wine bottle whose caustics (the bright patterns that form where a curved surface reflects or refracts light and concentrates it), shadows and highlights change with the illumination.

"Even if you have the best hologram out there," explains Raskar, it falls short when the angle of the light changes. "If I have a hologram of a flower, and a real flower next to it, the hologram doesn't look real. All the shadows and all the reflections on that flower are not mimicked on that hologram."

The new system, still a relatively low-resolution laboratory proof of concept, could have applications such as pictures used for training, he said. For example, in training someone to carry out industrial inspections, an image of the device to be inspected would respond just like a real object when the inspector shines lights on it from different angles.

Because the system is being built by hand from custom-made parts, Raskar says, the present version costs about $30 per pixel to make. Since it takes thousands of pixels to create a recognizable image, practical devices at an affordable price will require significant further development. "It will be at least 10 years before we have any realistic practical-sized displays," he estimates.
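The cost argument is simple arithmetic. The sketch below assumes the quoted prototype price of about $30 per pixel stays flat at any size, which ignores any savings from mass production; the display dimensions and budget used in the example are hypothetical.

```python
import math

# Approximate per-pixel cost quoted for the hand-built prototype (US dollars).
COST_PER_PIXEL = 30


def display_cost(width_px: int, height_px: int, cost_per_pixel: int = COST_PER_PIXEL) -> int:
    """Cost of a width x height display at a flat per-pixel price."""
    return width_px * height_px * cost_per_pixel


def max_square_side(budget: int, cost_per_pixel: int = COST_PER_PIXEL) -> int:
    """Side length, in pixels, of the largest square display a budget covers."""
    affordable_pixels = budget // cost_per_pixel
    return math.isqrt(affordable_pixels)
```

At that rate a 100 × 100 display, 10,000 pixels in all, would cost $300,000, and a $30,000 budget would buy only about a 31 × 31 array.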

The main applications ultimately would be in advertising and entertainment, Raskar says. A similar system could even be adapted to produce motion pictures and moving computer displays, he says.

The research was done in collaboration with Martin Fuchs, Hans-Peter Seidel, and Hendrik P.A. Lensch, all of MPI Informatik. The work was partly funded by Mitsubishi Electric Research Laboratories.

Provided by MIT